dhanshri.

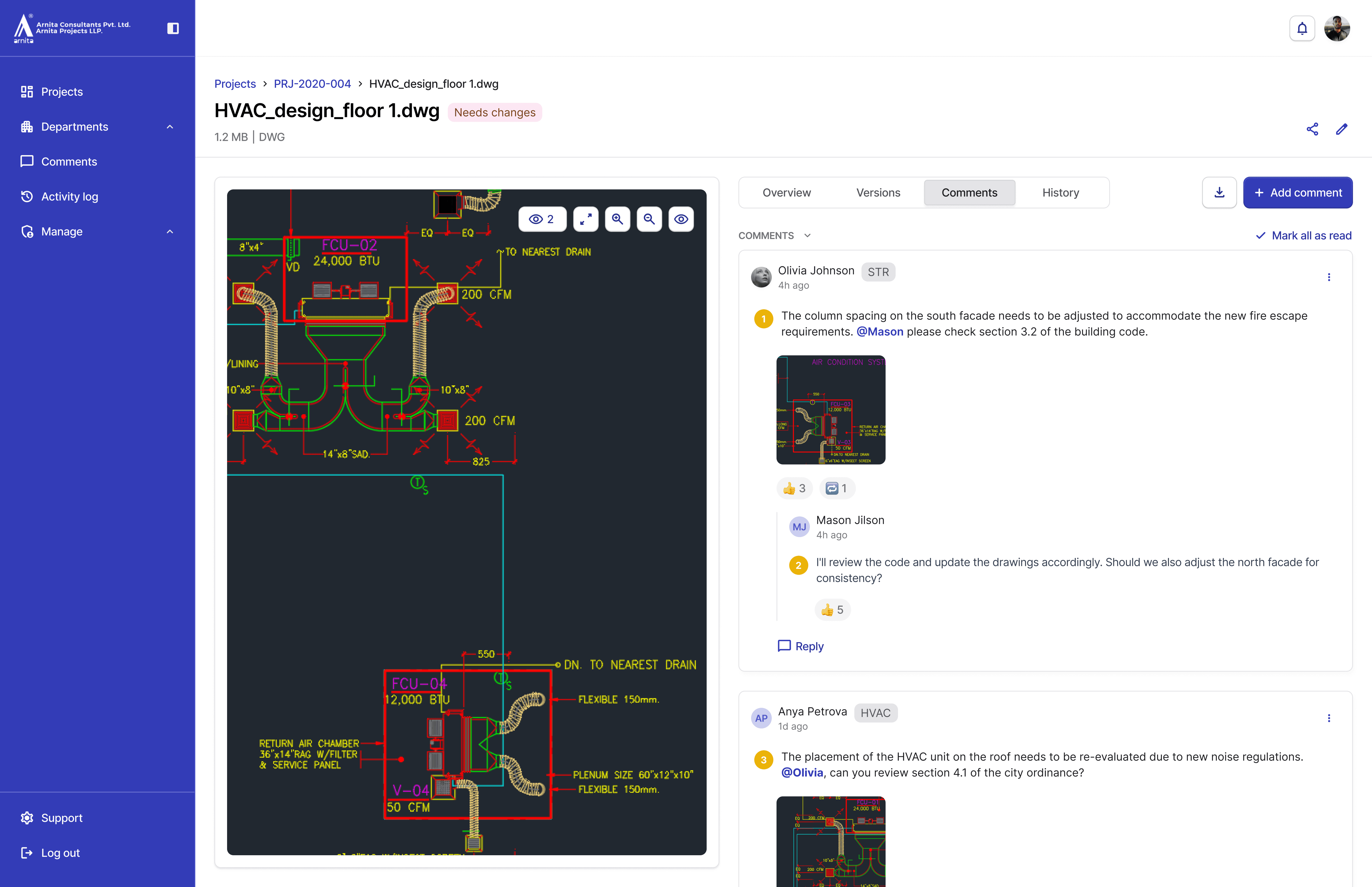

Solving cross-department coordination breakdown with a document management system

Arnita Consultants is a 100+ person architecture and engineering company specializing in large-scale pharmaceutical projects. Six departments (Architecture, Civil, Mechanical, Electrical, HVAC, and Plumbing) collaborate on 6 to 10 simultaneous GMP-regulated projects where drawing accuracy is critical. One misrouted electrical drawing cost $20,000 in rework.

I came in as the sole product designer to build their first internal document management system from the ground up.

My Role

Sole Product Designer

Team

1 Product Manager, 2 Engineers, 6 Department Leads

Timeline

15 weeks (0→1 MVP)

Contributions

Problem scoping, User research, Information architecture, MVP prioritization, High-fidelity prototypes, Component library, Stakeholder alignment, Developer handoff

Problem

6 departments, 100+ drawings, a missing review chain, and everything running on email memory

Drawings passed through different departments sequentially, but review status lived only in email threads and people's heads.

Engineers couldn't see which version was approved or site-ready. One electrical drawing reached the construction site unapproved and cost $20,000 in rework.

One review loop spread across multiple email threads.

5+

Email threads per review

22

Files duplicated with unclear naming

2+ hours/week

Time lost searching for the correct file

3 - 4 weeks

Per review cycle across departments

Solution Highlights

A single review system that tells every team member what's latest, who owns it, and what's been decided, without opening an email.

I built a three-layer coordination system (project browsing, file tables, and detail pages) that translated email-based workflows into structured review states with clear ownership, so teams could move reviews forward predictably.

Review visibility

See review state, ownership, and blockers, without opening a single email thread.

Version confidence

One current version across all departments. Auto-versioned on upload, with change notes required.

Traceable decisions

Every comment pinned to the version it was made on. Feedback stays in the system, not in a separate email thread.

Impact

The system shipped. Review tracking moved from email threads to a single source of truth.

I built a three-layer coordination system: project browsing, file tables with status tracking, and detailed file pages showing ownership, review state, and version history. The Architecture, Structural, Electrical, and Civil departments adopted it within 2 weeks.

4/6 departments

Adopted the system within the first month of launch.

70%

Less time spent locating files and chasing uploaders. Per person, per week.

22 → 2

Duplicate files per 100 drawings after automatic versioning shipped.

60%

Faster review cycles from submission to sign-off, post-launch.

So, what made this problem different?

Procore and BIM 360 didn't fit: enterprise licensing was too costly for an internal-only team, and neither supported the multi-department, sequential sign-off workflow. Building custom was a strategic decision, not a fallback.

Research

Mapping the problem: where drawings moved, who touched them, and where they stalled

I used three methods to build this workflow map and validate the coordination breakdown across all six departments. Workflow mapping showed the process. Interviews validated the pain. Observation quantified the cost.

Workflow mapping

Traced one project end-to-end through all 6 departments

Goal: Map the complete review workflow to identify where handoffs happened and where coordination stalled.

Stakeholder interviews

12 interviews across 6 departments revealed the same friction

Goal: Validate the mapped workflow and understand department-specific pain points and needs.

Shadow sessions

Observed engineers searching for files to quantify the coordination cost

Goal: Watch the actual search-and-verify workflow in real time to measure time spent and identify friction points.

Key insights

These insights led to brainstorming on features, clustered into 3 groups: Review visibility, Version confidence, and Traceable decisions.

Research revealed a pattern: review coordination failed at every handoff because status, ownership, and version history were invisible to the system.

Every review started with a time-consuming search

No system-level visibility into "what needs my review." They searched folders and emails to find files waiting for them.

Changes required manual comparison across drawings

They toggled between versions and department layers to spot differences. Change context lived in scattered emails, not in the system.

Status existed only in people's heads

"Is this approved?" had no system answer. Employees cross-referenced 5-6 folder levels and email threads to reconstruct review state.

No version control, just duplicates

22 duplicate files per 100 drawings with names like "final_final_v3." No way to identify the current version without opening each file.

Design strategy

The constraint wasn't just time. It was deciding what the system had to get right first.

I ran prioritization sessions with each lead using their folder structures and email chains as evidence. We deferred integrations and advanced tooling that increased engineering risk or required major process changes.

Review Visibility

Central dashboard

Search and navigation

Review status states

Activity log

Multi-reviewer routing

External BIM 360 / Procore sync

Version Confidence

Automatic versioning

Version history

Upload new version and change notes

Assigned reviewer/owner

Restore prior version

Automated clash-detection alerts

Traceable Decisions

Compare versions

Threaded comments

Decision capture

Real-time collaborative markup

E-signature stamp

Must have (Phase 1)

Won't have (Defer)

Core visibility before advanced automation

Why: Can't automate coordination if basic visibility doesn't exist.

The trade-off: Some manual handoffs remained, but adoption was immediate.

Standalone system, defer integrations

Why: Integrations meant engineering dependencies and month-long timelines.

The trade-off: Getting departments to use the system quickly was more valuable than tool interoperability.

Async traceable decisions, not real-time

Why: Persistent comments solved the problem without forcing synchronous work.

The trade-off: No live markup sessions, but persistent comments worked and kept adoption friction low.

Final solution

A file-first system that answers three questions: What's in review? What's current? What is the feedback?

Feature 1:

Review Visibility: drill down to file view

A cross-department entry point that surfaces project status, workload, and deadlines upfront. It creates a predictable drill-down from project → file → version, so teams can find the right artifact fast without navigating folders or chasing updates.

Initial iteration

After testing with department leads

The first version prioritized uploading. The final prioritized reviewing. That one shift changed the entire information hierarchy.

Feature 2:

Version Confidence: Upload a new version without creating a "new file"

Versioning is auto-generated on upload, removing the human decision of "what do I name this?" Change notes are required, so context travels with the file, not in a separate email thread.
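The upload rule described above can be sketched in a few lines. This is a minimal illustration, not the shipped implementation: the class names, the `upload` method, and the zero-padded `R01`-style labels (inferred from the R03/R05 examples in this case study) are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Revision:
    label: str        # e.g. "R01"; label format is an assumption
    uploader: str
    change_note: str


@dataclass
class DrawingFile:
    """Hypothetical model: one logical file holding an ordered list of revisions."""
    name: str
    revisions: list[Revision] = field(default_factory=list)

    def upload(self, uploader: str, change_note: str) -> Revision:
        # The change note is mandatory, so context travels with the file.
        if not change_note.strip():
            raise ValueError("A change note is required for every upload")
        # The system assigns the next label; the uploader never names a version.
        label = f"R{len(self.revisions) + 1:02d}"
        revision = Revision(label, uploader, change_note.strip())
        self.revisions.append(revision)
        return revision

    @property
    def current(self) -> Revision:
        # The latest upload is the single current version
        # (raises IndexError if nothing has been uploaded yet).
        return self.revisions[-1]
```

Because a new upload appends to the same file record instead of creating a sibling file, names like "final_final_v3" have no place to appear.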

Feature 3:

Traceable Decisions: Every review decision is traceable. Who said what, on which revision.

The compare view keeps everything in one place. Comments are pinned to the version they were made on, so when R05 is uploaded, feedback from R03 stays exactly where it belongs.

Comment directly in the review flow

Reviewers comment directly on the drawing, reply in thread, and mark items resolved. Ownership is clear. Follow-ups stay inside the system.

Feedback attached to the right version

When a new revision uploads, prior feedback stays visible on the revision it belongs to.
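One way to picture version-pinned feedback is a comment record that stores the revision label it was written against. The sketch below is illustrative only; `Comment`, `ReviewThread`, and their fields are hypothetical names, not the system's actual data model.

```python
from dataclasses import dataclass, field


@dataclass
class Comment:
    author: str
    text: str
    revision: str                  # label of the revision the comment was made on
    resolved: bool = False
    replies: list["Comment"] = field(default_factory=list)


@dataclass
class ReviewThread:
    """Hypothetical container for all top-level comments on one drawing file."""
    comments: list[Comment] = field(default_factory=list)

    def add(self, author: str, text: str, revision: str) -> Comment:
        comment = Comment(author, text, revision)
        self.comments.append(comment)
        return comment

    def for_revision(self, revision: str) -> list[Comment]:
        # Uploading a newer revision never moves or hides older feedback;
        # each comment is always looked up by the revision it was pinned to.
        return [c for c in self.comments if c.revision == revision]
```

With this shape, feedback left on R03 is still returned by `for_revision("R03")` after R05 is uploaded, and replies and the resolved flag stay attached to the original comment.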

Learnings

Three things this project taught me about 0→1 design.

In a non-product company, trust is the real design problem.

Teams had years of muscle memory around email and folders. Showing people their own pain, before proposing a solution, mattered more than any feature decision.

Sole designers need a decision log, not just a handoff doc.

After six weeks, I couldn't always recall why certain trade-offs were made. A running decision log would have made stakeholder reviews and the handoff sharper.

Test the edges earlier.

I didn't test the compare view with HVAC before launch. I knew the zoom constraint existed and had flagged it, but I didn't push to validate it early enough. It cost us a hard launch week and real friction with two departments.

Next time: test the edges with the people most likely to reject them, not just the early adopters.